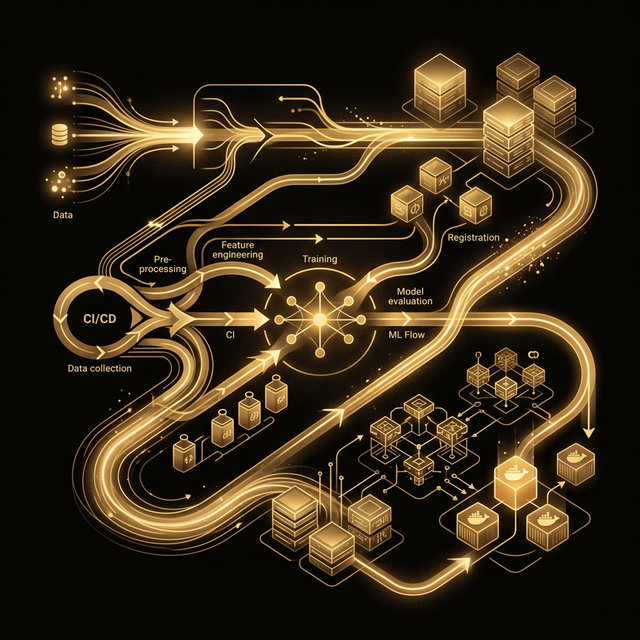

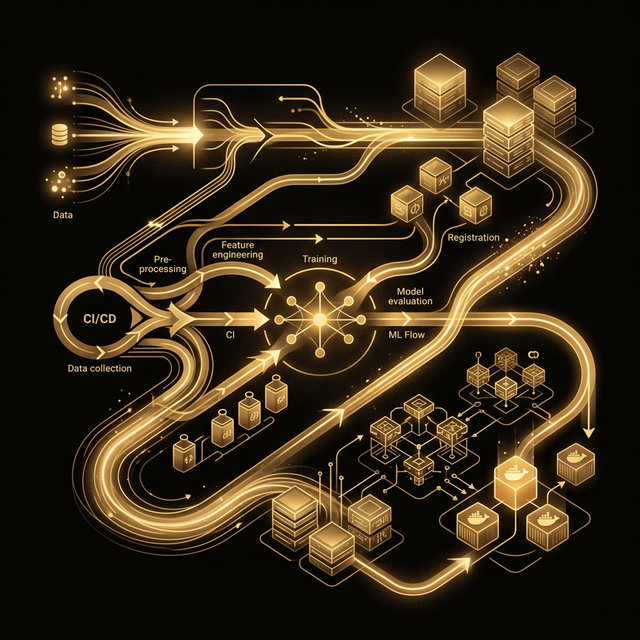

Production ML Pipeline

End-to-end LLM pipeline with full observability, model versioning, and zero-downtime automated deployment via CI/CD.

The client had a working AI prototype but no path to production. Models were deployed manually, there was no versioning or monitoring, and every update risked downtime. They needed a production-grade MLOps pipeline.

I designed and implemented a complete production pipeline, covering everything from the model registry and automated testing through containerized deployment with health checks, rollback capability, and real-time performance monitoring.
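The idea behind versioning plus rollback can be shown with a minimal in-memory sketch. The `ModelRegistry` class, its method names, and the artifact paths are hypothetical illustrations; a real setup would back this with a persistent registry service rather than Python lists.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class ModelVersion:
    version: int
    artifact_uri: str  # hypothetical location of the serialized model


@dataclass
class ModelRegistry:
    """Hypothetical in-memory registry: versions are immutable, and
    "production" is only a pointer that can move forward or back."""

    versions: list[ModelVersion] = field(default_factory=list)
    history: list[int] = field(default_factory=list)  # promoted versions, oldest first

    def register(self, artifact_uri: str) -> ModelVersion:
        # Each registration receives the next monotonically increasing version number.
        mv = ModelVersion(version=len(self.versions) + 1, artifact_uri=artifact_uri)
        self.versions.append(mv)
        return mv

    def promote(self, version: int) -> None:
        if not 1 <= version <= len(self.versions):
            raise ValueError(f"unknown model version {version}")
        self.history.append(version)

    @property
    def production(self) -> ModelVersion | None:
        if not self.history:
            return None
        return self.versions[self.history[-1] - 1]

    def rollback(self) -> ModelVersion:
        # Drop the current production pointer and fall back to the previous one.
        if len(self.history) < 2:
            raise RuntimeError("no earlier production version to roll back to")
        self.history.pop()
        return self.versions[self.history[-1] - 1]


registry = ModelRegistry()
v1 = registry.register("models/llm/v1")  # hypothetical artifact paths
v2 = registry.register("models/llm/v2")
registry.promote(v1.version)
registry.promote(v2.version)
registry.rollback()  # v2 misbehaves in production: revert the pointer
print(registry.production.version)  # → 1
```

Keeping old versions immutable and moving only a pointer is what makes rollback cheap in this design: nothing is rebuilt, and the previous artifact is simply served again.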

A robust, automated pipeline from code commit to production deployment.

A developer pushes a code or model update, and GitHub Actions triggers the automated pipeline.
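Conceptually, the workflow triggered by a push behaves like a fail-fast chain of stages, similar to GitHub Actions jobs linked with `needs:`. The Python sketch below models only that ordering logic; the stage names and their checks are hypothetical placeholders, not the client's actual workflow.

```python
from typing import Callable

# Each stage is a (name, check) pair; a check returns True on success.
Stage = tuple[str, Callable[[], bool]]


def run_pipeline(stages: list[Stage]) -> tuple[bool, list[str]]:
    """Run stages in order and stop at the first failure (fail-fast).

    Returns (succeeded, names of the stages that actually ran)."""
    ran: list[str] = []
    for name, check in stages:
        ran.append(name)
        if not check():
            return False, ran  # later stages, such as deploy, never run
    return True, ran


# Hypothetical stages standing in for real CI jobs.
pipeline: list[Stage] = [
    ("test", lambda: True),
    ("build", lambda: True),
    ("deploy-canary", lambda: True),
]
print(run_pipeline(pipeline))  # → (True, ['test', 'build', 'deploy-canary'])
```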

Unit tests, integration tests, and model evaluation benchmarks run automatically.
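Model evaluation benchmarks are most useful as a gate: the run fails if a candidate model regresses against the current production baseline. This is a minimal sketch assuming higher-is-better metrics and an optional per-metric tolerance; the metric names and scores are invented for illustration.

```python
def evaluation_gate(
    candidate: dict[str, float],
    baseline: dict[str, float],
    tolerance: dict[str, float],
) -> list[str]:
    """Return the metrics on which the candidate regressed beyond tolerance.

    Assumes every metric is higher-is-better. An empty list means the gate passes."""
    failures = []
    for metric, base_score in baseline.items():
        if metric not in candidate:
            failures.append(f"{metric}: missing from candidate results")
            continue
        minimum = base_score - tolerance.get(metric, 0.0)  # default: no drop allowed
        if candidate[metric] < minimum:
            failures.append(f"{metric}: {candidate[metric]:.3f} < minimum {minimum:.3f}")
    return failures


# Hypothetical benchmark scores for illustration only.
baseline_scores = {"exact_match": 0.71, "faithfulness": 0.88}
candidate_scores = {"exact_match": 0.70, "faithfulness": 0.84}
problems = evaluation_gate(candidate_scores, baseline_scores, {"exact_match": 0.02})
print(problems)  # flags faithfulness only; exact_match is within its tolerance
```

In CI, a non-empty result would be turned into a non-zero exit code (for example with `sys.exit(1)`) so that the job fails and the deployment stages never start.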

The Docker image is built with pinned dependencies, tagged with its version, and pushed to the container registry.
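Two small checks capture the build step: refusing unpinned dependencies and deriving a deterministic image tag. This sketch assumes a pip-style `requirements.txt` and a tag made of a semantic version plus a short git SHA; both conventions, and the registry hostname in the example, are assumptions rather than details from the project.

```python
import re

# A requirement counts as pinned only if it uses an exact "==" version.
PINNED = re.compile(r"^[A-Za-z0-9._-]+(\[[A-Za-z0-9,._-]+\])?\s*==\s*[^=\s;]+")


def unpinned_requirements(requirements_text: str) -> list[str]:
    """Return requirement lines that are not pinned to an exact version."""
    bad = []
    for raw in requirements_text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and surrounding whitespace
        if not line or line.startswith("-"):  # skip blanks and pip options such as -r
            continue
        if not PINNED.match(line):
            bad.append(line)
    return bad


def image_tag(registry: str, name: str, version: str, git_sha: str) -> str:
    """Build a tag such as registry/name:1.4.0-3f2c9ab (hypothetical convention)."""
    if not re.fullmatch(r"\d+\.\d+\.\d+", version):
        raise ValueError(f"expected MAJOR.MINOR.PATCH, got {version!r}")
    return f"{registry}/{name}:{version}-{git_sha[:7]}"


reqs = """
fastapi==0.111.0
numpy>=1.26  # not pinned
uvicorn[standard]==0.30.1
"""
print(unpinned_requirements(reqs))  # → ['numpy>=1.26']
print(image_tag("registry.example.com", "llm-service", "1.4.0", "3f2c9ab81d0e"))
# → registry.example.com/llm-service:1.4.0-3f2c9ab
```

Including the commit SHA in the tag means every image can be traced back to the exact source that produced it, which is what makes a rollback to a known-good image unambiguous.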

A canary release is deployed to staging, and health checks must pass before it is promoted to production.
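The promotion decision can be expressed as a simple rule over health-check results collected from the canary. This is a sketch under assumed thresholds: the success-rate and latency limits, the minimum sample size, and the `HealthSample` type are hypothetical, and in practice these numbers would come from the service's own SLOs.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    PROMOTE = "promote"
    ROLLBACK = "rollback"
    WAIT = "wait"  # not enough evidence yet


@dataclass
class HealthSample:
    ok: bool           # did the health check succeed?
    latency_ms: float  # response time of that check


def canary_decision(
    samples: list[HealthSample],
    min_samples: int = 20,
    min_success_rate: float = 0.99,
    max_p95_latency_ms: float = 800.0,
) -> Decision:
    """Decide whether to promote the canary, roll it back, or keep observing."""
    if len(samples) < min_samples:
        return Decision.WAIT
    success_rate = sum(s.ok for s in samples) / len(samples)
    latencies = sorted(s.latency_ms for s in samples)
    # Nearest-rank 95th percentile: rank = ceil(0.95 * n), computed with integers.
    rank = (95 * len(latencies) + 99) // 100
    p95 = latencies[rank - 1]
    if success_rate < min_success_rate or p95 > max_p95_latency_ms:
        return Decision.ROLLBACK
    return Decision.PROMOTE


healthy = [HealthSample(ok=True, latency_ms=120.0) for _ in range(50)]
print(canary_decision(healthy))  # → Decision.PROMOTE
```

The explicit `WAIT` outcome keeps a canary from being promoted on the strength of a handful of lucky checks.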

Prometheus scrapes the service metrics, Grafana dashboards visualize them, and PagerDuty raises alerts on anomalies.
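Prometheus collects metrics by scraping an HTTP endpoint (conventionally `/metrics`) that returns its plain-text exposition format. In a real service the official `prometheus_client` library would produce this output; the dependency-free sketch below only shows what a labeled request counter and a latency histogram look like in that format. The metric names, label, and bucket boundaries are hypothetical.

```python
from bisect import bisect_left
from collections import Counter


class LatencyHistogram:
    """Cumulative histogram in the style of a Prometheus histogram metric."""

    def __init__(self, name: str, help_text: str, buckets: list[float]):
        self.name = name
        self.help_text = help_text
        self.buckets = sorted(buckets)         # upper bounds ("le") of each bucket
        self.counts = [0] * len(self.buckets)  # non-cumulative count per bucket
        self.total = 0
        self.sum = 0.0

    def observe(self, value: float) -> None:
        i = bisect_left(self.buckets, value)  # first bucket whose bound is >= value
        if i < len(self.counts):
            self.counts[i] += 1
        self.total += 1  # values above every bound are counted only in +Inf
        self.sum += value

    def render(self) -> str:
        lines = [f"# HELP {self.name} {self.help_text}", f"# TYPE {self.name} histogram"]
        cumulative = 0
        for bound, count in zip(self.buckets, self.counts):
            cumulative += count  # Prometheus buckets are cumulative
            lines.append(f'{self.name}_bucket{{le="{bound}"}} {cumulative}')
        lines.append(f'{self.name}_bucket{{le="+Inf"}} {self.total}')
        lines.append(f"{self.name}_sum {self.sum}")
        lines.append(f"{self.name}_count {self.total}")
        return "\n".join(lines)


def render_counter(name: str, help_text: str, label: str, counts: Counter) -> str:
    """Render one counter series per label value."""
    lines = [f"# HELP {name} {help_text}", f"# TYPE {name} counter"]
    for value, n in sorted(counts.items()):
        lines.append(f'{name}{{{label}="{value}"}} {n}')
    return "\n".join(lines)


status_counts = Counter(["ok", "ok", "error"])
latency = LatencyHistogram("llm_request_latency_seconds", "Inference latency.", [0.5, 1.0, 2.5])
for seconds in (0.3, 0.8, 3.1):
    latency.observe(seconds)
print(render_counter("llm_requests_total", "Inference requests by status.", "status", status_counts))
print(latency.render())
```

Alerting rules evaluated against series like these (for example, a rising error-status counter or a growing high-latency bucket) are what would ultimately be routed to PagerDuty.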